Sentient Arena Welcomes Pantera Capital and Franklin Templeton for AI Agent Testing

With participation from Pantera Capital and Franklin Templeton, Sentient has introduced Arena, an enterprise-focused platform designed for evaluating AI agent performance on real-world business tasks.

Franklin Templeton's digital assets division and Pantera Capital have joined the inaugural cohort of Arena, a new testing framework from open-source artificial intelligence lab Sentient for assessing AI agent capabilities in enterprise-grade workflows.

In a Friday announcement shared with Cointelegraph, Sentient described Arena as a platform that emphasizes production-style benchmarking over static model evaluation. Rather than scoring agents only against fixed datasets, the system runs them through standardized tasks modeled on enterprise work, which involve long documents, fragmented information and sources that may contradict one another.

"In this initial phase, participation refers to supporting the Arena program and developer cohort," Oleg Golev, product lead at Sentient Labs, told Cointelegraph.

According to Golev, participating partners are helping to define "production-ready reasoning" for document-heavy tasks such as analysis, compliance checks and operations. The participating companies have not disclosed any financial commitments tied to the initiative.

The launch comes as enterprises rapidly integrate AI agents into their research and operations, even though governance structures remain underdeveloped.

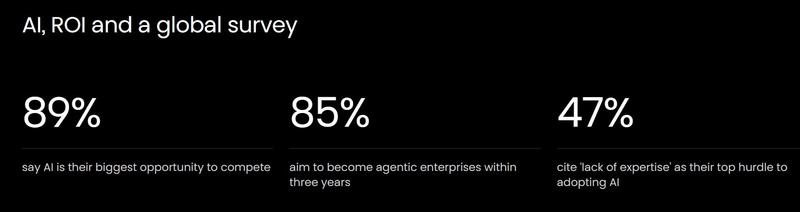

According to the Celonis 2026 Process Optimization Report, released on Feb. 4, about 85% of senior business executives surveyed said they intend to turn their organizations into "agentic enterprises" within the next three years, yet only 19% currently use multi-agent systems.

Production-style evaluation, not static scoring

Golev said Arena functions as a collaborative platform where developers can submit AI agents for standardized task evaluations and compare results under uniform test conditions.

The system tracks failure categories, including hallucinations, missing evidence, citation errors and reasoning gaps, so developers can identify and diagnose recurring failure patterns.

Arena plans to publish comparative performance data on a public leaderboard and to release postmortem analyses outlining common failure patterns and their fixes.

Infrastructure partners such as OpenRouter and Fireworks are providing inference compute for the inaugural developer cohort, while other partners are supporting development tools and educational workshops.

Governance layer amid rising AI autonomy

The move comes as companies in finance and crypto experiment with giving AI systems greater economic autonomy.

On Wednesday, MoonPay unveiled infrastructure that lets AI agents create cryptocurrency wallets and carry out stablecoin transactions.

On Thursday, Stripe executives warned that blockchain networks may need substantial scaling improvements if AI-driven commerce grows significantly.