X rolls out three-month monetization suspension for creators who fail to label AI-generated war content

Content creators who share artificially generated conflict videos on X without proper labeling face a 90-day suspension from the platform's monetization program.

X has announced that it will suspend users from its creator revenue-sharing program for 90 days if they publish AI-generated videos of armed conflicts without clearly disclosing that the material was made with AI.

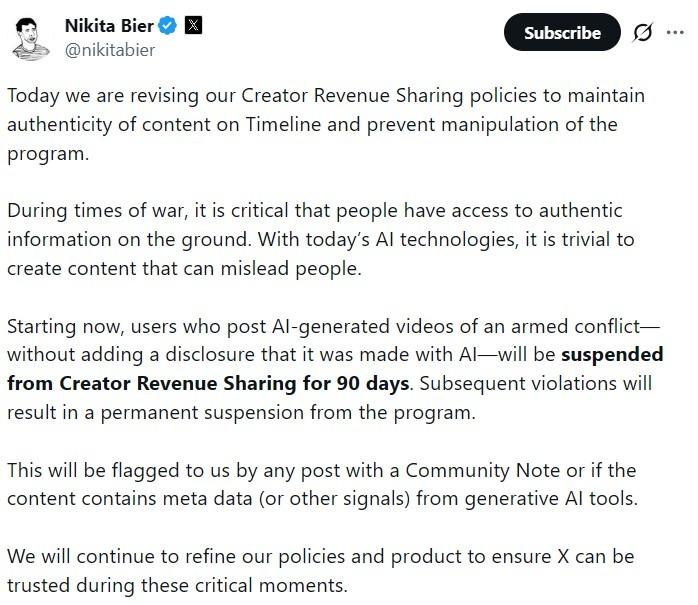

X's product chief, Nikita Bier, announced the policy on Wednesday, saying the rule is meant to preserve the "authenticity of content on Timeline" during military conflicts, when false or deceptive media can spread rapidly across the platform.

"During times of war, it is critical that people have access to authentic information on the ground. With today's AI technologies, it is trivial to create content that can mislead people."

Nikita Bier

The policy update adds financial consequences to X's existing content moderation framework, tying creators' ability to earn revenue on the platform directly to proper disclosure of AI-generated media.

Monetization enforcement tied to AI disclosure

Unlike conventional moderation tools such as warning labels or content removal, the new rule targets the platform's creator monetization system, restricting access to revenue sharing when the policy is violated.

According to X, creators who publish AI-generated conflict videos must clearly disclose that the material was produced with AI. Those who do not could be suspended from the monetization program for 90 days.

Enforcement will be triggered when a post is flagged by Community Notes or when the system detects metadata or other identifying markers from generative AI tools.

Creators who repeatedly share undisclosed AI-generated videos depicting military conflicts could be permanently removed from X's creator revenue-sharing program.

The rule applies specifically to videos depicting armed conflicts and is not a blanket ban on AI-generated material on the platform.

Middle East conflict raises misinformation concerns

The announcement comes as conflict in the Middle East remains a central topic of discussion across social media.

On Feb. 28, the United States and Israel launched joint airstrikes on Iran. Bitcoin (BTC) briefly dropped to about $63,000 but later recovered. At the time of writing, it traded near $70,000, according to CoinGecko.

AI is also being integrated more deeply into modern warfare and military operations. On March 1, the US military used Anthropic's Claude AI model to assist with intelligence analysis and targeting during operations linked to the Iran strikes.